As the saying goes, everything is a model. Any non-trivial system can then be modeled using a variety of models, each one focusing on a useful perspective. Models will be of different types and at different abstraction levels.

But all these models are useless by themselves. To be useful, we must be able to operate on them. We want to merge, align, refactor, refine, … these models depending on our high-level goal. For instance, if the goal is to generate a running version of the system, we will typically merge high-level models and then refactor and refine them until we get an executable system representation.

All these operations are defined as model transformations. As such, model transformations are a key ingredient of any model-driven engineering process.

Model transformations can be Model-to-Model (M2M) or Model-to-Text (M2T) transformations. In the former, both the input and the output of the transformation are models, while in the latter, the output is a text string. M2M transformations are the focus of this post.

How do model-to-model transformations work?

The simplest type of M2M transformation takes a single model as input and produces a single model as output. But we could also have one-to-many and many-to-one transformations (e.g. when merging a set of models into a unified one). M2Ms can also be classified based on whether the source and target models conform to the same metamodel (endogenous vs exogenous) and on whether the transformation writes to a separate output model or modifies the input model directly (out-place vs in-place). See this survey for a complete overview of all flavours of model transformations.
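To make these classification axes concrete, here is a minimal Python sketch. `TransformationSignature` and `classify` are hypothetical names invented for this illustration and do not belong to any transformation tool. Each entry in `sources` and `targets` stands for the metamodel of one input or output model.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class TransformationSignature:
    """Hypothetical descriptor of an M2M transformation (illustrative only)."""
    sources: Tuple[str, ...]   # metamodel name of each input model
    targets: Tuple[str, ...]   # metamodel name of each output model
    in_place: bool             # True if the input model itself is modified


def classify(sig: TransformationSignature) -> str:
    # Endogenous: inputs and outputs conform to the same metamodel(s).
    kind = "endogenous" if set(sig.sources) == set(sig.targets) else "exogenous"
    placement = "in-place" if sig.in_place else "out-place"
    n_in = "one" if len(sig.sources) == 1 else "many"
    n_out = "one" if len(sig.targets) == 1 else "many"
    return f"{n_in}-to-{n_out}, {kind}, {placement}"


# Merging two UML models into a single UML model.
merge = TransformationSignature(("UML", "UML"), ("UML",), in_place=False)
# Deriving a database schema from a UML class model.
to_schema = TransformationSignature(("UML",), ("Relational",), in_place=False)
# Renaming elements directly inside a UML model (a refactoring).
rename = TransformationSignature(("UML",), ("UML",), in_place=True)

for sig in (merge, to_schema, rename):
    print(classify(sig))
```

A refactoring such as a rename is the typical in-place, endogenous case, while generating a schema from a class model is out-place and exogenous.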

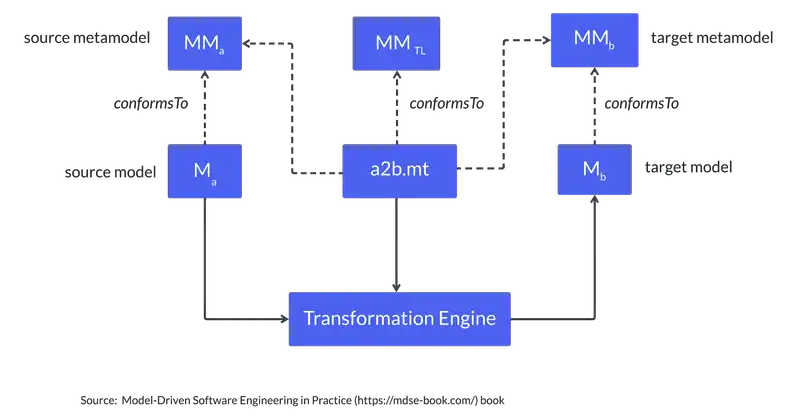

The figure above (taken from the Model-Driven Software Engineering in Practice book) shows the basic schema of a model transformation. The transformation specification is defined at the metamodel level, between a source metamodel and a target metamodel. Then, given an input model (conforming to the source metamodel), the transformation engine generates an output model (conforming to the target metamodel).
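The schema can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the two toy metamodels, the `Element` type, the `conforms_to` check and the `run` function are not taken from the book or from any real engine. A metamodel is reduced to a mapping from element type to the set of properties its instances must carry.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set

# Hypothetical, deliberately tiny metamodels: element type -> required properties.
CLASS_METAMODEL: Dict[str, Set[str]] = {"Class": {"name", "attributes"}}
RELATIONAL_METAMODEL: Dict[str, Set[str]] = {"Table": {"name", "columns"}}


@dataclass
class Element:
    type: str
    props: Dict[str, object] = field(default_factory=dict)


Model = List[Element]


def conforms_to(model: Model, metamodel: Dict[str, Set[str]]) -> bool:
    """A model conforms if every element has a known type and exactly its properties."""
    return all(
        e.type in metamodel and set(e.props) == metamodel[e.type]
        for e in model
    )


def class_to_relational(source: Model) -> Model:
    """Transformation specification: every Class becomes a Table with an id column."""
    return [
        Element("Table", {
            "name": str(c.props["name"]).lower(),
            "columns": ["id"] + list(c.props["attributes"]),
        })
        for c in source if c.type == "Class"
    ]


def run(transformation: Callable[[Model], Model], source: Model,
        source_mm: Dict[str, Set[str]], target_mm: Dict[str, Set[str]]) -> Model:
    """Toy engine: check the input, execute the specification, check the output."""
    if not conforms_to(source, source_mm):
        raise ValueError("input model does not conform to the source metamodel")
    target = transformation(source)
    if not conforms_to(target, target_mm):
        raise ValueError("output model does not conform to the target metamodel")
    return target


library = [Element("Class", {"name": "Book", "attributes": ["title", "isbn"]})]
tables = run(class_to_relational, library, CLASS_METAMODEL, RELATIONAL_METAMODEL)
print(tables)
```

Note that `class_to_relational` never mentions a specific model: it is written against the element types of the two metamodels, which is what lets the same specification run on any conforming input.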

What transformation languages and tools are available?

There are dozens of transformation tools, each of them specialised in particular transformation scenarios. Most of them go for rule-based approaches, where each transformation rule is composed of a matching pattern (to identify subsets of the source model to be transformed) and a subsequent set of actions to perform on the match. It is often up to the transformation engine to choose the rule application order.
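As a rough illustration of the rule-based style, the following Python sketch pairs a matching pattern with an action and leaves the scheduling to a tiny engine. `Rule`, `apply_rules` and the two example rules are hypothetical; real engines are considerably more sophisticated, and the "first matching rule wins" policy is just one assumption among many possible ones.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Element = Dict[str, object]


@dataclass
class Rule:
    name: str
    matches: Callable[[Element], bool]     # matching pattern over source elements
    action: Callable[[Element], Element]   # builds the target element for a match


def apply_rules(rules: List[Rule], source: List[Element]) -> List[Element]:
    """Toy engine: it, not the rule author, decides when each rule fires."""
    target: List[Element] = []
    for element in source:
        for rule in rules:
            if rule.matches(element):
                target.append(rule.action(element))
                break  # assumption: the first matching rule wins
    return target


rules = [
    Rule(
        "Class2Table",
        matches=lambda e: e["kind"] == "Class" and bool(e.get("persistent", False)),
        action=lambda e: {"kind": "Table", "name": e["name"]},
    ),
    Rule(
        "DataType2SqlType",
        matches=lambda e: e["kind"] == "DataType",
        action=lambda e: {"kind": "SqlType", "name": str(e["name"]).upper()},
    ),
]

source = [
    {"kind": "Class", "name": "Book", "persistent": True},
    {"kind": "Class", "name": "Cache", "persistent": False},  # matched by no rule
    {"kind": "DataType", "name": "string"},
]

result = apply_rules(rules, source)
print(result)
```

The non-persistent `Cache` class is silently skipped because no pattern matches it, which is the usual behaviour one would expect from a rule-based transformation.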

Transformation languages also favor declarative approaches, where you define what the target model should look like depending on the elements found in the source model. But this is often complemented with some imperative constructs that enable transformation designers to deal with more complex transformation scenarios.

Among all the options, I’d like to highlight the following three: ATL (the first really popular transformation tool, though it is not what it used to be), ETL (my go-to option if you’re looking for a powerful tool with great support and even an online playground to give it a try) and QVT (the standard option). QVT has two language versions, a declarative and an imperative one. The latter is the only one with good support, so if you prefer an option backed by a standards committee and are happy to “go imperative”, QVT would be your choice.

But you could just use any programming language. On the positive side, you won’t need to learn a new language and tool. On the negative side, you will not have all the transformation primitives that facilitate the writing of transformations. This article offers a more comprehensive discussion on the pros and cons of this choice.
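To give a feel for the trade-off, here is a hand-written transformation in plain Python. It is only a sketch under assumed, made-up data structures (dictionaries for classes and tables). The point is the `trace` dictionary: keeping track of which target element was created from which source element, so that references can be resolved later, is the kind of bookkeeping that dedicated transformation languages typically take care of and that you have to write yourself here.

```python
from typing import Dict, List


def classes_to_tables(classes: List[dict]) -> List[dict]:
    # Manual trace links: source class name -> table generated from it.
    trace: Dict[str, dict] = {}

    # Pass 1: create one table per class and remember where it came from.
    for cls in classes:
        trace[cls["name"]] = {
            "table": cls["name"].lower(),
            "columns": ["id"],
            "foreign_keys": [],
        }

    # Pass 2: references can only be resolved once every table exists.
    for cls in classes:
        table = trace[cls["name"]]
        for ref in cls.get("references", []):
            target_table = trace[ref]
            table["columns"].append(f"{target_table['table']}_id")
            table["foreign_keys"].append(target_table["table"])

    return list(trace.values())


model = [
    {"name": "Book", "references": ["Author"]},  # Book points to a class defined later
    {"name": "Author"},
]

tables = classes_to_tables(model)
print(tables)
```

The two-pass structure is needed because `Book` refers to `Author` before the `Author` table has been created. It works, but every new kind of reference means more hand-maintained plumbing.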

Couldn’t I just import a transformation?

You may be wondering whether you really need to create your own transformation at all. Isn’t there some kind of transformation marketplace where you could go to check if the transformation you need is already available?

Unfortunately, the answer is no. We had some initiatives trying to collect popular transformations (e.g. see the ATL Transformation Zoo) but nothing “serious” enough for industrial use. It’s not really a technical problem, more of a maturity one. As we discussed in a previous post, we still have a lot of work to do on making modeling more transparent.

Therefore, I’m afraid for now you’ll still need to try the languages mentioned above and get your hands dirty.

Unless you’ve got enough data, i.e. examples of pairs of input and target models. If so, you could always try to let a neural network learn the transformation rules automatically!